你好,是楼上的 CNN 代码,

import tensorflow as tf

import numpy as np

class CNN (tf.keras.Model):

def __init__(self):

super ().__init__()

self.conv1 = tf.keras.layers.Conv2D (

filters=32, # 卷积层神经元(卷积核)数目

kernel_size=[5, 5], # 感受野大小

padding='same', # padding 策略

activation=tf.nn.relu # 激活函数

)

self.pool1 = tf.keras.layers.MaxPooling2D (pool_size=[2, 2], strides=2)

self.conv2 = tf.keras.layers.Conv2D (

filters=64,

kernel_size=[5, 5],

padding='same',

activation=tf.nn.relu

)

self.pool2 = tf.keras.layers.MaxPooling2D (pool_size=[2, 2], strides=2)

self.flatten = tf.keras.layers.Reshape (target_shape=(7 * 7 * 64,))

self.dense1 = tf.keras.layers.Dense (units=1024, activation=tf.nn.relu)

self.dense2 = tf.keras.layers.Dense (units=10)

def call (self, inputs):

x = self.conv1 (inputs) # [batch_size, 28, 28, 32]

x = self.pool1 (x) # [batch_size, 14, 14, 32]

x = self.conv2 (x) # [batch_size, 14, 14, 64]

x = self.pool2 (x) # [batch_size, 7, 7, 64]

x = self.flatten (x) # [batch_size, 7 * 7 * 64]

x = self.dense1 (x) # [batch_size, 1024]

x = self.dense2 (x) # [batch_size, 10]

output = tf.nn.softmax (x)

return output

class MNISTLoader:

def __init__(self):

mnist = tf.keras.datasets.mnist

(self.train_data, self.train_label), (self.test_data, self.test_label) = mnist.load_data ()

self.train_data = np.expand_dims (self.train_data.astype (np.float32) / 255, axis=-1)

self.test_data = np.expand_dims (self.test_data.astype (np.float32) / 255, axis=-1)

self.train_label = self.train_label.astype (np.int32)

self.test_label = self.test_label.astype (np.int32)

self.num_train_data, self.num_test_data = self.train_data.shape [0], self.test_data.shape [0]

print (self.num_train_data, self.num_test_data)

def get_batch (self, batch_size):

index = np.random.randint (0, self.num_train_data, batch_size)

return self.train_data [index, :], self.train_label [index]

if __name__ == '__main__':

num_epochs = 5 # 1 个 epoch 表示过了 1 遍训练集中的所有样本

batch_size = 50 # 一次迭代使用的样本量

learning_rate = 0.001

model = CNN ()

data_loader = MNISTLoader ()

optimizer = tf.keras.optimizers.Adam (learning_rate=learning_rate)

num_batches = int (data_loader.num_train_data // batch_size * num_epochs)

for batch_index in range (num_batches):

X, y = data_loader.get_batch (batch_size)

with tf.GradientTape () as tape:

y_pred = model (X)

# 交叉熵作为损失函数

loss = tf.keras.losses.sparse_categorical_crossentropy (y_true=y, y_pred=y_pred)

loss = tf.reduce_sum (loss)

print ('batch %d: loss %f' % (batch_index, loss.numpy ()))

grads = tape.gradient (loss, model.variables)

optimizer.apply_gradients (grads_and_vars=zip (grads, model.variables))

sparse_categorical_accuracy = tf.keras.metrics.SparseCategoricalAccuracy ()

num_batches = int (data_loader.num_test_data // batch_size)

for batch_index in range (num_batches):

start_index, end_index = batch_index * batch_size, (batch_index + 1) * batch_size

y_pred = model.predict (data_loader.test_data [start_index: end_index])

sparse_categorical_accuracy.update_state (y_true=data_loader.test_label [start_index: end_index], y_pred=y_pred)

print ('test accuracy: %f' % sparse_categorical_accuracy.result ())

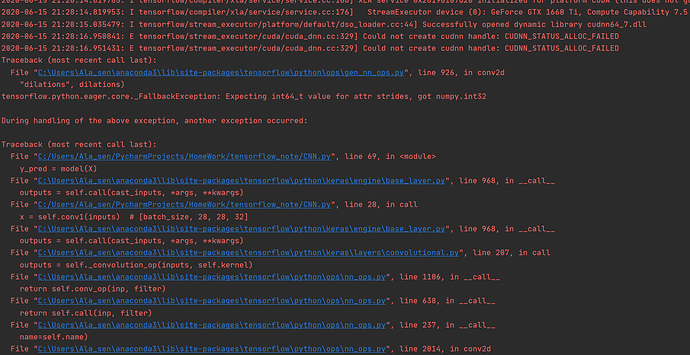

下面是完整报错信息,麻烦看一下